Use AI to Determine, Not Just Infer: Why Declarative AI Matters for Regulated Institutions

AI companies are racing to convince you that their models are smart enough to figure out your business. However, most enterprise AI deployments quietly fail in the gap between that promise and what regulated institutions actually need — a gap that declarative AI is built to close.

You’ve probably sat through a compelling AI demo. The model answers fluently, summarizes documents, and generates reports that look like what your team spends hours producing by hand.

But then someone in the room asks whether it knows how you define a primary banking relationship. They ask whether it applies your credit policy thresholds the same way every time, and what you’d show a regulator who questioned a decision it made. Those are the questions that separate AI that looks good from AI that works for your institution, in your regulatory environment, at the stakes you’re operating under.

The Flaw in Model-Centric AI

A growing number of AI vendors are building what are called model-centric systems, on the premise that a sufficiently capable model given enough of your data will figure out your business. The models are genuinely impressive, but model intelligence isn’t what solves the problem these institutions face.

Every regulated institution — community bank, credit union, company running enterprise IT under compliance requirements — operates on institutional knowledge that is declared rather than discovered. Your definition of a criticized asset, your risk rating thresholds, and your rules for what triggers a relationship review aren’t patterns hidden in your data waiting for a model to find them. They are decisions your institution has made, codified in policy, and required to be applied consistently across every loan review, compliance filing, and customer interaction.

When a model-centric AI system tries to apply your institutional logic, it doesn’t read your policy manual and execute it. It infers what your logic probably is, based on patterns in your data and whatever context you’ve fed it at the moment of the query. Every answer is a probabilistic approximation of a declarative truth.

That level of approximation is acceptable for marketing copy, but not for a credit decision, a regulatory disclosure, or a risk report going to your board.

Declarative AI vs. Inferential: The Distinction That Changes Everything

There are two fundamentally different ways to make an AI system work:

Inferential AI asks the model to reason its way to the right answer using whatever data and context you provide, making the model itself the intelligence layer. In theory, a better model produces better output. In practice, the model’s output varies based on how a question is phrased, what context was retrieved, and what version of the model is running, so there is no single authoritative answer, only the current best inference.

Declarative AI encodes your institutional logic into the data foundation before the model ever sees it, expressing your definitions, rules, and thresholds as an explicit, governed data architecture. The model doesn’t need to infer what “aggregate calendar-year deposits” means, because your intelligence layer has already defined and computed it. The job of the model is to reason over a foundation of established fact rather than construct that foundation on the fly.
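To make the idea concrete, here is a minimal Python sketch of a declared definition computed in the data layer. Everything in it is an assumption for illustration: the `Deposit` record, the function name, and the specific definition of "aggregate calendar-year deposits" (deposits posted between January 1 and December 31, summed per customer). Your institution's actual definition would come from its own policy.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Deposit:
    customer_id: str
    posted_on: date
    amount: Decimal


def aggregate_calendar_year_deposits(deposits, year):
    """Hypothetical declared definition: the sum of deposit amounts
    posted during calendar year `year`, per customer."""
    totals = {}
    for d in deposits:
        if d.posted_on.year == year:
            totals[d.customer_id] = totals.get(d.customer_id, Decimal("0")) + d.amount
    return totals


# Illustrative records only, not real data.
deposits = [
    Deposit("C-001", date(2024, 1, 15), Decimal("1200.00")),
    Deposit("C-001", date(2024, 6, 30), Decimal("800.50")),
    Deposit("C-001", date(2023, 12, 31), Decimal("999.00")),  # prior year, excluded
    Deposit("C-002", date(2024, 3, 2), Decimal("50.00")),
]

# Computed once in the data layer; a model reads this value instead of deriving it.
standing_metric = aggregate_calendar_year_deposits(deposits, 2024)
```

Because the definition is ordinary code over governed data, the same inputs always yield the same figure, regardless of how a question is phrased or which model is asked.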

For companies in regulated industries, it’s the difference between an AI system you can stand behind and one you can only hope doesn’t embarrass you in front of an examiner.

Why "Better Models" Aren’t the Solution

The standard vendor response is that models are getting better fast, and soon they’ll handle institutional complexity reliably. Models are improving rapidly, but improvement doesn’t resolve the declarative vs. inferential problem. A more capable model makes better guesses; it doesn’t turn guesses into facts. Your credit policy isn’t a pattern to be discovered at higher confidence levels. It’s a decision to be applied with complete consistency.

Governance is the dimension that will eventually land on a CEO’s or CIO’s desk personally. Model risk management guidance such as SR 11-7 expects the models behind your decisions to be documented, validated, and auditable, which means when an examiner asks why a decision was made, “the model reasoned its way to this answer” isn’t a defense; it’s an admission. A governed rule with documented provenance is something you can put in front of a regulator, a board risk committee, or your own general counsel. Model weights are not.

There’s also a cost structure dimension that matters more the longer you run the system. Model-centric AI is a variable cost that scales with usage: every query, every user, every new workflow adds to the bill, and the more your institution embraces AI, the faster the number grows. Platform-centric AI, where the declared logic lives in a governed data layer, is closer to a fixed cost you build once, where the marginal cost of additional use is near zero. Per-token prices will keep falling, but they won’t close this gap, because the volume of tokens required to re-derive your institutional context at query time doesn’t compress. By year three, the two architectures produce very different numbers on your P&L.
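The cost comparison can be written down as simple arithmetic. The sketch below is a simplified model under stated assumptions: usage-billed spend is query volume times a per-query price, and platform spend is a one-time build cost plus a flat monthly running cost. The function and parameter names are hypothetical, and no real prices are implied.

```python
def fixed_cost(build_cost, monthly_run_cost, months):
    """Cumulative platform spend: build once, then a flat running cost (assumed)."""
    return build_cost + monthly_run_cost * months


def variable_cost(monthly_queries, cost_per_query):
    """Cumulative usage-billed spend over a list of monthly query volumes."""
    return sum(q * cost_per_query for q in monthly_queries)


def breakeven_month(monthly_queries, cost_per_query, build_cost, monthly_run_cost):
    """First month (1-based) in which cumulative usage-billed spend exceeds
    cumulative platform spend, or None if that never happens in the period."""
    spent = 0.0
    for month, queries in enumerate(monthly_queries, start=1):
        spent += queries * cost_per_query
        if spent > fixed_cost(build_cost, monthly_run_cost, month):
            return month
    return None
```

Feeding in your own projected query volumes and quoted prices shows whether, and when, the two curves cross for your institution.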

The Integration Problem Nobody Talks About

There’s a harder truth underneath all of this that the AI demos never address: most enterprise AI deployments fail not because the model isn’t good enough, but because the data isn’t ready.

Your customer records live in one system, transaction history lives in another, and loan origination data lives in a third. None of those systems were designed to talk to each other, and none of them have consistent definitions of shared concepts. “Customer” means something different in your core banking platform, your CRM, and your treasury management system.

Getting an AI model to reason accurately over that environment isn’t a prompt engineering challenge; it’s a data engineering one, and it is the part most AI programs systematically underestimate. Resolving customer identity across a core banking platform, a CRM, and a treasury system, reconciling how Fiserv or Jack Henry structures accounts against your own definitions, and maintaining those definitions through core upgrades and acquisitions require years of domain-specific work. When an AI initiative stalls or comes in over budget, this is almost always where it happened.
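As a deliberately simplified illustration of what resolving customer identity involves, the Python sketch below groups records from three systems by a normalized match key. The key (letters of the name plus date of birth), the record fields, and the sample records are all assumptions made for the example; production identity resolution has to handle far messier cases, which is exactly why it takes the sustained effort described above.

```python
import re
from collections import defaultdict


def match_key(record):
    """Hypothetical match key: lowercase letters of the name plus date of birth."""
    name = re.sub(r"[^a-z]", "", record["name"].lower())
    return (name, record["dob"])


def resolve_identities(*systems):
    """Group records from several source systems that share a match key."""
    groups = defaultdict(list)
    for system_name, records in systems:
        for record in records:
            groups[match_key(record)].append((system_name, record["id"]))
    return dict(groups)


# Illustrative records only, not real data.
core = ("core", [{"id": "CORE-17", "name": "Maria O'Neil", "dob": "1980-04-02"}])
crm = ("crm", [{"id": "CRM-903", "name": "MARIA ONEIL", "dob": "1980-04-02"}])
treasury = ("treasury", [{"id": "TM-5", "name": "Sam Lee", "dob": "1975-11-20"}])

resolved = resolve_identities(core, crm, treasury)
```

Here the core and CRM records collapse into one customer despite different spelling and casing, while the treasury record stays separate.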

This is the work most AI vendors skip. They show you what the model can do once someone else has solved the data problem. They leave the data problem to you.

The data foundation is the moat — not because it’s expensive to build, but because it takes years to do right and it’s specific to your institution. When a competitor promises to replicate it with a smarter model, they’re proposing to shortcut a decade of domain-specific engineering. That’s not a technical claim. It’s a sales claim.

Three Things to Require Before You Commit Budget

If you’re a CEO or CIO evaluating AI investments, there are three things worth requiring of any vendor before you commit budget.

Require that your institutional logic lives in the data layer, not in the model or the prompt. Your definitions and business rules should be explicit, governed, and independent of the model, so they survive vendor changes, model upgrades, and staff turnover. If a vendor can’t show you where that logic lives, you’re being asked to store your institution’s intelligence inside someone else’s product.

Require a clear model-upgrade path that doesn’t put your institutional knowledge at risk. In a model-centric architecture, a model upgrade can invalidate the logic encoded in the current model, forcing you to revalidate your AI every time the vendor ships a release. In a platform-centric one, the intelligence layer is model-independent and the model is a swappable component. Ask your vendor to explain their upgrade path.

Require that every AI-supported decision be defensible to a regulator on its own terms. You should be able to point to the rule itself — when it was authored, what data it depends on, what it produces — not a description of what the AI probably did. If a vendor can’t produce that, you’re the one who will be asked to explain it.
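One way to picture a rule you can point to is as a small, versioned object that carries its own provenance. The Python sketch below is an assumption-laden illustration, not a description of any vendor's product: the `GovernedRule` class, its fields, the rule identifier, the authoring date, and the 1.25 minimum are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class GovernedRule:
    rule_id: str
    description: str
    authored_on: date   # when the rule was authored
    input_field: str    # what data it depends on
    minimum: float      # the declared threshold

    def evaluate(self, inputs):
        """Apply the rule and return a record a reviewer can inspect."""
        value = inputs[self.input_field]
        outcome = "pass" if value >= self.minimum else "review"
        return {
            "rule_id": self.rule_id,
            "authored_on": self.authored_on.isoformat(),
            "input": {self.input_field: value},
            "outcome": outcome,
        }


# Hypothetical rule and threshold, for illustration only.
rule = GovernedRule(
    rule_id="DSCR-MIN",
    description="Send loans below the minimum debt service coverage to review",
    authored_on=date(2024, 1, 1),
    input_field="debt_service_coverage",
    minimum=1.25,
)

decision = rule.evaluate({"debt_service_coverage": 1.10})
```

The returned record states which rule fired, when it was authored, what input it read, and what it produced, which is the kind of evidence the third requirement asks for.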

Before deploying any AI agent or generative capability into a regulated workflow, verify that the underlying data is trustworthy, governed, and AI-ready, with resolved customer identity, codified business definitions, and derived intelligence maintained as standing metrics rather than computed on demand. Build incrementally, but anchor the roadmap on what architecture serves your institution in year three, not what you can show in thirty days. And insist on model independence, so that as foundation models improve, you benefit from the improvement without having to revalidate your institutional logic.

The AI companies competing for your budget are offering real capability, and the models are improving, but model capability is increasingly a commodity. What isn’t a commodity is a declarative AI foundation — governed, institution-specific, and built to give every model you deploy established fact to reason from. That foundation is what separates AI that works in a boardroom presentation from AI that works at 8 AM on a Monday, when your banker needs to know who to call, why it matters, and what to say — and needs to be sure it’s right.

Aunalytics

Aunalytics is a data and AI company helping financial institutions use their data to drive deposit growth and engagement. By transforming their data into intelligence, we help teams grow deposits, enhance member relationships, and increase efficiency. Aunalytics provides software, infrastructure, and data strategy advice, guiding every step of your journey.

AI is Only As Good As Your Data

Every week, another AI vendor promises their platform will transform your financial institution. Better member insights, smarter lending decisions, and automated reporting. The pitch is compelling and the pressure to act is real.

Before you sign a contract, there’s a question worth asking: Do you actually have the data to back it up?

AI is only as good as the data underneath it, and most financial institutions don’t yet have data that’s ready for AI.

The key is starting with your data foundation first.

For financial institutions, the challenge isn’t the amount of data; it’s data readiness. When you skip the step of cleaning and structuring your data and go straight to the AI layer, here’s what happens:

- The AI produces answers that feel authoritative but are statistically probable, rather than being declaratively accurate.

- You can’t audit the decision: you don’t know why it said what it said.

- You keep running the same calculations over and over, driving up costs with every query.

This isn’t a tech failure. It’s a sequencing failure. The intelligence has to be built into the data before you hand it to an AI.

What "AI-Ready Data" Actually Means

AI-ready data has been transformed, enriched with business logic, and structured so that when a question is asked, the answer is calculated, not guessed.

Think of it this way: if you ask an AI to tell you which members are at risk of leaving this quarter, it needs more than raw transaction records. It needs a unified view of each member’s relationship with your institution, behavioral signals over time, and the business rules your team uses to define “at risk” in the first place. That context must be built in.

The intelligence is in the platform. You must build it into the data layer before AI can deliver answers you can trust and act on.

Two Approaches and Why They're Not Equal

Approach One: Ask the AI to Figure It Out

Some vendors take raw data, often pulled from a cloud warehouse, and let the AI model do the calculations on the fly. The model ingests your data, runs its analysis, and returns an answer.

This sounds efficient. It’s not. Every calculation runs repeatedly, consuming tokens and compute resources with each query. Costs scale with usage, not with value. And when you ask, “why did you flag this member?” the answer is a statistical distribution, not a reason.

Approach Two: Pre-Compute the Intelligence

The more effective approach, and the one Aunalytics is grounded in, is to do the hard work before the AI ever sees the question. Every relevant metric, every business rule, every behavioral signal is calculated, validated, and stored in a structured intelligence layer.

When a question comes in, the AI retrieves a precise answer from data that was already prepared for it. The result is faster, cheaper, more accurate, and fully auditable.
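The pre-compute pattern can be sketched in a few lines of Python. This is an illustration under assumptions, not Aunalytics' implementation: the "at risk" rule (a balance drop of at least 50% over 90 days), the field names, and the function names are all hypothetical.

```python
def build_intelligence_layer(members, min_balance_drop=0.5):
    """Batch step: apply a hypothetical 'at risk' rule once per member and
    store the result together with the reason for it."""
    layer = {}
    for m in members:
        if m["balance_90d_ago"] > 0:
            drop = 1 - m["balance_now"] / m["balance_90d_ago"]
        else:
            drop = 0.0  # no prior balance to compare against
        layer[m["member_id"]] = {
            "at_risk": drop >= min_balance_drop,
            "reason": (
                f"balance fell {drop:.0%} in 90 days "
                f"(rule threshold {min_balance_drop:.0%})"
            ),
        }
    return layer


def answer(layer, member_id):
    """Query step: a lookup against stored results, with no recalculation."""
    return layer[member_id]


# Illustrative records only, not real data.
members = [
    {"member_id": "M1", "balance_now": 400.0, "balance_90d_ago": 1000.0},
    {"member_id": "M2", "balance_now": 900.0, "balance_90d_ago": 1000.0},
]
layer = build_intelligence_layer(members)
```

Asking why a member was flagged returns the stored reason rather than a fresh inference, and asking again costs a lookup, not another calculation.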

This is what we mean when we say Aunalytics makes data AI-ready.

What This Means for Your Institution

If you’re a CEO, CIO, or CTO at a financial institution, this distinction matters for three reasons:

- Accuracy: Declarative answers built on prepared data are more reliable than probabilistic outputs from raw data. When a banker acts on an insight, they need to trust it.

- Auditability: Regulators and examiners want to know why a decision was made. With pre-computed intelligence, you can show your work. With probabilistic AI, you can’t.

- Cost: Paying for compute on every query — at scale — adds up fast. Pre-computed data means you’re paying for results, not repeated calculations.

The Partner Question

Most community financial institutions don’t have the data science teams, the infrastructure, or the time to build this foundation themselves. They don’t need to.

But they do need a partner who’s already done the work — one who understands community banking deeply and can deliver production-ready AI data as a service.

That’s not a software tool. It’s not a dashboard. It’s a managed service built on years of experience working with the specific data structures, core systems, and regulatory environment of community banks and credit unions.

Aunalytics has been building and refining banking-specific data sets for over eight years. The Intelligent Data Warehouse isn’t a general-purpose platform adapted for banking. It was built for banking from the ground up.

Before you evaluate the next AI platform, ask the vendor one question:

What does your solution do to prepare my data for AI before the AI ever touches it?

The answer will tell you everything.

Start With the Right Foundation

The institutions that will win with AI aren’t the ones who adopt it fastest. They’re the ones who build the right foundation first — and find a partner who can help them get there without building a data science department from scratch.

Aunalytics


Turning Missed Moments into Meaningful Connections: How AI Drives Deposit Growth by Amplifying the Human Touch in Community Banking

Community banks and credit unions have always had a competitive edge: deep, trusted relationships with their customers and members. But in today’s environment, where digital expectations meet lean staffing and fragmented systems, even these institutions face a new kind of challenge.

Large banks are investing billions in AI to replicate what community banks do naturally — build relationships. According to Citibank, 93% of financial institutions expect AI to improve profits within five years, potentially unlocking $170 billion in industry-wide gains by 2028.

Yet the real issue facing community-based financial institutions isn’t just technology. It’s a quiet acceptance of the limits of human capacity — and the tolerance of inefficiency.

The Core Challenge: Banking Has Normalized Missed Opportunities

Most community financial institutions today have accepted that front-line staff can only do so much. Customer-facing personnel like branch managers, private bankers, and relationship bankers are responsible for hundreds, sometimes thousands, of customer relationships. With each client holding multiple accounts and generating thousands of transactions, it’s become operationally impossible to deliver the kind of proactive, personalized service that defines the brand of community banking. Without the tools to monitor every customer’s needs in a timely manner, they’re often limited to engaging only with the individuals who walk into the branch or proactively reach out to the bank or credit union.

The Result: A Culture of Firefighting

A customer quietly transfers funds to a competitor — and no one follows up.

A member switches jobs, prompting financial changes that go unnoticed due to a lack of timely alerts.

A well-connected team member at a large local employer with referral potential is never identified as an influencer.

These moments aren’t missed due to lack of care or intention. They’re missed because banks and credit unions have had to accept the limits of their current staffing models and tools. Hiring enough employees to cover every opportunity would be cost-prohibitive. So, institutions settle for staffing formulas that prioritize coverage over connection.

But what if you didn’t have to choose?

The Opportunity: Use AI to Scale Personal Service Without Scaling Headcount

That’s where Aunalytics comes in. Its solutions enable front-line staff to engage with all of their customers, not just those who raise their hands, by surfacing key activity signals and recommending the right time and message to connect. This puts every banker in the right place at the right time, which can ultimately lead to a net increase in core deposits. Rather than accepting that proactive service is too expensive, Aunalytics uses AI to unlock it at scale, analyzing transactional and CRM data to uncover key relationship signals and delivering them directly to the banker or credit union professional. No combing through dashboards. No digging. Just timely, actionable insights tailored to each role.

Now, community-based financial institutions can identify critical relationship moments before they’re lost — retaining deposits, strengthening loyalty, and generating new business without increasing headcount.

Furthermore, Aunalytics goes beyond delivering a software solution by offering a strategic partnership that includes hands-on guidance and deep industry expertise. Every engagement includes a dedicated team of data engineers and analytics experts who guide implementation and support long-term success. This hands-on approach ensures institutions are not left to interpret or operationalize AI insights on their own.

The Critical First Step: An Intelligent Data Warehouse

The most important component of any AI system is the quality and structure of its data, along with the data model it references to generate answers and perform humanlike tasks. Without a solid data foundation, AI efforts often struggle with fragmented, inconsistent, or incomplete data, which limits their effectiveness and scalability. The first step, therefore, is to create a reliable knowledge base that serves as the source of truth. To support accuracy and traceability, Aunalytics has developed an Intelligent Data Warehouse and data model specific to community-based financial institutions, setting their data and analytics initiatives up for success.

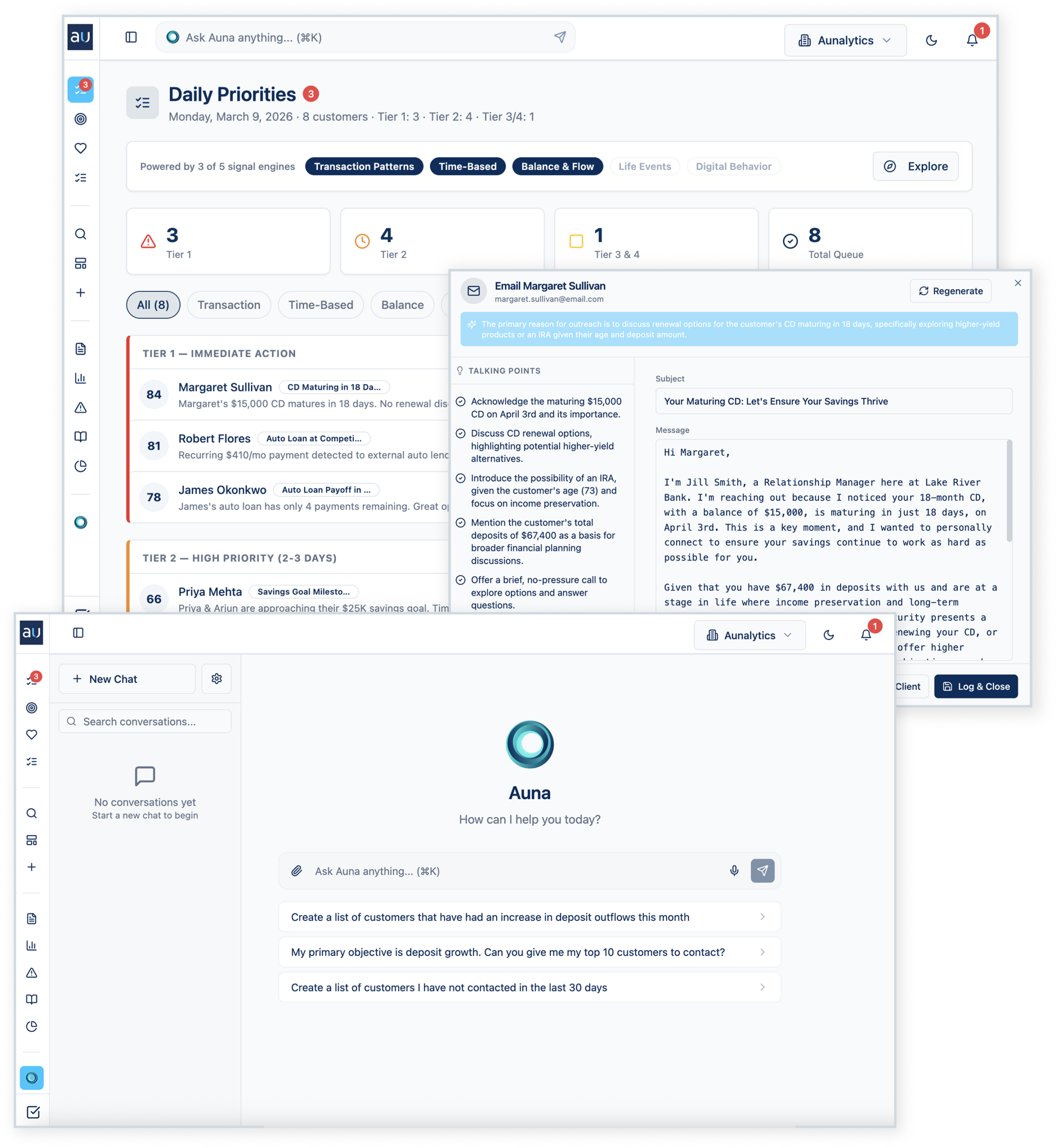

Auna: Insights That Find You

Auna is designed to help mid-sized financial institutions do work that drives growth. This approach shifts institutions from reactive responders into proactive relationship-builders, without requiring more people or more hours. Some of its main components are:

- Daily Priorities: Instead of relationship managers pulling reports or digging through their email, Auna scans thousands of transactions from the last 24 hours, cross-references them against months of trend data, runs them against your institution’s playbook, and surfaces the five things you need to do today.

- Personalized Outreach: Auna not only surfaces who should be contacted and when, but also generates a personalized outreach message on the spot.

- Conversational Chat: A private, natural language interface that allows any staff member to query near real-time data, with no technical expertise required.
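The Daily Priorities flow described above (scan recent transactions, compare against trend data, apply the playbook, surface the top five) can be sketched roughly as follows. This is a guess at the general shape, not Auna's actual logic: the function, the playbook structure, and the "outbound transfer larger than three times the customer's average" rule are all hypothetical.

```python
from statistics import mean


def daily_priorities(todays_transactions, trend_history, playbook, limit=5):
    """Check each of today's transactions against the customer's trend
    baseline and the playbook rules, then keep the highest-priority actions."""
    candidates = []
    for txn in todays_transactions:
        baseline = mean(trend_history.get(txn["customer_id"], [0]))
        for rule in playbook:
            if rule["matches"](txn, baseline):
                candidates.append({
                    "customer_id": txn["customer_id"],
                    "action": rule["action"],
                    "priority": rule["priority"],
                })
    candidates.sort(key=lambda c: c["priority"], reverse=True)
    return candidates[:limit]


# Hypothetical playbook rule: an unusually large outbound transfer.
playbook = [{
    "action": "Call about large outbound transfer",
    "priority": 10,
    "matches": lambda txn, baseline: (
        txn["type"] == "outbound_transfer" and txn["amount"] > 3 * baseline
    ),
}]

# Illustrative records only, not real data.
history = {"C1": [1000, 1200, 800]}
today = [
    {"customer_id": "C1", "type": "outbound_transfer", "amount": 5000},
    {"customer_id": "C2", "type": "deposit", "amount": 100},
]

priorities = daily_priorities(today, history, playbook)
```

The point of the sketch is the ordering of the steps: the playbook is explicit data, so what gets surfaced to a banker traces back to a rule the institution wrote.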

Examples in Action

- Retention: A high-value client moves a large sum to a competitor. Auna detects the transaction and prompts immediate outreach.

- Engagement: A shift in direct deposits signals a life transition. Staff receive an alert to check in and support the customer.

- Acquisition: A potential advocate is identified based on network or employer data, prompting the launch of a referral playbook.

Designed for Action, Not Analysis

Traditional BI tools often lead to “dashboard fatigue,” where the sheer abundance of data fails to drive business outcomes and the analytics are underused or overlooked. Auna elevates the focus from analysis to action. By surfacing just the insights that matter, right when they matter, bankers can spend precious time on relationships, not reporting. Even a few hours saved per week per employee compounds into hundreds of hours redirected to higher value activity across the institution.

Unlike dashboards that sit unused, Auna’s notifications are consistently read and acted upon. And the natural language chat feature ensures no question is too complex or too technical to answer.

A Modern Strategy for a Human-Centered Mission

Community financial institutions shouldn’t be forced to choose between digital efficiency and human connection. With Auna, they can have both. AI becomes an extension of their relationship model — empowering staff to drive growth by acting on what matters, when it matters, at a scale previously impossible. Because in a world of automation, relationships still win — and now, they can win at scale.

Tracy Graham

Tracy Graham is the co-founder of Aunalytics, a data and AI company that equips community banks and credit unions with the data foundation and AI execution to transform how they operate.

The Efficiency Ratio Problem No One Is Actually Solving

Every year, we attend banking conferences and hear advice echoed from stage after stage: “Get your data together to use AI.” It’s become a mantra in the industry. Everyone agrees it’s important, and then most go home and nothing meaningfully changes.

Data matters. And the leaders I talk to know it matters. The part that many miss today is understanding what “getting your data together” really means. That gap, between the slogan and the substance, is good news. It means the biggest opportunity to improve your efficiency ratio is still in front of you.

The Advice Everyone Gives but Nobody Finishes

When most people talk about getting your data together, they mean integration. Pull it from your core, your loan origination system, your CRM, your general ledger, and get it into one place, maybe a dashboard. Consolidated reporting is better than fragmented reporting, but if the end goal is using artificial intelligence to drive your efficiency ratio, then integration is step two of a ten-step journey.

The good news? The next steps are clearer than you might think.

What “getting your data together” really means is something far more granular and far more valuable. It means refining your data. It means embedding business context, encoding the business logic of your specific bank. It means having your data ready to use AI. Once you understand that distinction, the path forward becomes surprisingly actionable.

What Is a Data Foundation, Really?

A properly built data foundation is what unlocks AI. It’s the key to doing the work you already do, but quicker, easier, and smarter.

Context matters because an LLM is extraordinary at processing language, identifying patterns, and generating output. What it cannot do, on its own, is understand that when your bank says, “Primary Relationship” or “Class 3 commercial real estate,” it means something slightly different than when the institution next door says the same thing. It doesn’t know your policy exceptions, committee preferences, or the dozen small decisions your best banker makes without even thinking about them.

That’s business logic. And every bank’s logic is different, which is a strength. Your institutional knowledge is a competitive asset. The data foundation doesn’t just store your data. It teaches AI how your bank actually works, preserving and scaling the expertise your team has built over years.

The Credit Memo Example

Take credit memo writing. It’s the example that illustrates the opportunity clearly, and once you see it, the same pattern shows up across the institution.

A credit memo is a structured synthesis of financial data, borrower history, market context, policy compliance, and risk assessment. It’s organized in a way that tells a clear story to a credit committee. It requires knowledge, context, and consistency.

A commercial banker and credit analyst might spend hours on a single credit memo. Not because the intellectual work is that complex, but because the assembly is. Pulling data from multiple systems, cross-referencing financials, ensuring the narrative aligns with current policy, formatting it correctly, reviewing it for completeness. Your bankers shouldn’t be spending their best hours on assembly.

Now the exciting question: what is stopping AI from writing that credit memo?

Frontier LLMs can synthesize, analyze, and write at extraordinary levels. What’s stopping them is context and business logic. The AI doesn’t know your bank’s specific credit policy. It doesn’t know how your committee likes to see deals presented. It doesn’t know that your chief credit officer always wants to see the debt service coverage calculated a certain way, or that your institution has a specific appetite for owner-occupied CRE that differs from the industry norm.

But if you’ve built the data foundation, if you’ve done the important work of refining your data, embedding your context, and encoding your logic, then an AI agent can draft that memo in your format, with your logic. The commercial banker reviews it, applies judgment, and moves on to the next relationship. The work that took hours now takes minutes. Multiply that across your team, across your branches, across a year, and you’re looking at a meaningful shift in your efficiency ratio from a single AI capability: writing credit memos.
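For readers who want the metric spelled out, the efficiency ratio is conventionally noninterest expense divided by revenue (net interest income plus noninterest income), so a lower number is better. The short Python sketch below uses that standard definition; the function name and the guard against non-positive revenue are choices made for the example.

```python
def efficiency_ratio(noninterest_expense, net_interest_income, noninterest_income):
    """Noninterest expense divided by total revenue; lower is better."""
    revenue = net_interest_income + noninterest_income
    if revenue <= 0:
        raise ValueError("revenue must be positive to compute an efficiency ratio")
    return noninterest_expense / revenue
```

Time saved on assembly work shows up in this ratio only to the extent it lowers noninterest expense or frees capacity that grows revenue, which is why the size of the shift depends on your own figures.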

A Framework for Doing This

So what does this look like in practice? I’d suggest a framework that starts with the work, rather than the tech.

Decompose. Pick a role: commercial banker, credit analyst, branch manager, compliance officer. Map the activities that role performs. Be specific. Instead of “lending,” think “spreading financials from tax returns.” Instead of “compliance,” think “reviewing BSA alerts and documenting decisions.” You’ll be surprised how clarifying this exercise is.

Categorize. Which of those activities require judgment, relationships, or creativity? Which are information processing: data retrieval, synthesis, pattern matching, structured output? When you free your people from assembly work, they can focus on the high-value activities that drew them to banking in the first place.

Build the foundation. This is the work that matters most and gets talked about least. Refine your data. Contextualize it. Encode your business logic. Build the data infrastructure that turns your raw institutional knowledge into something an AI can reason with. This is a strategic investment, and it’s the step that separates institutions using AI from institutions talking about AI.

Deploy with precision. Start with one AI agent — conversational, accessible, embedded in your workflow — that takes on one specific, high-leverage activity. Credit memo drafting. Loan covenant monitoring. Exception tracking. Whatever generates the most time savings for the most people. Prove the value, build trust, then expand.

The Efficiency Ratio Is a Lagging Indicator of a Leading Decision

Every CEO I talk to cares about their efficiency ratio. It’s one of the clearest measures of how well an institution converts revenue into profit. But here’s what I’d encourage every leader reading this to consider: the efficiency ratio of the future won’t be driven by the same levers as the efficiency ratio of the past.

It will be driven by how effectively your people are equipped to do their work. It will be driven by whether your institution has built the data infrastructure to let AI do what AI is uniquely qualified to do, so your people can do what they’re uniquely qualified to do. The institutions that invest in foundational, context-rich data work now will operate at a level of efficiency that sets them apart.

That’s the future driver of efficiency ratio. It’s not a dashboard. It’s not a chatbot bolted onto your website. It’s a data foundation that knows your bank as well as your best banker does, and an AI layer that puts that knowledge to work, every day, at scale.

The opportunity is here. The path is clear. And the institutions that move now will be the ones that define what community banking looks like for the next generation.

Tracy Graham


How State and Local Governments Can Use Technology to Overcome Economic Challenges

State and local governments currently face significant challenges stemming from the state of the economy, including decreased tax revenues, sustained high inflation, and a shortage of the proficient IT personnel who are vital to their day-to-day operations. Industry experts consider technology an effective way to address these shortfalls during challenging economic periods.

Aunalytics is a data platform company. We deliver insights as a service to answer your most important IT and business questions.

Security Maturity Improvement is Imperative as Cyberattack Risks Remain High

While advancing technology offers significant benefits, it has also made it easier for those who seek to gain an advantage by exploiting others. An attack can be devastating for any business and impact it for many years to come—today’s organizations need to move toward security maturity by utilizing multiple lines of defense against cybercrime.

Cyber Insurance Continues to Skyrocket—Do You Have a Documentable Security Strategy in Place to Show You’re Prepared?

Cyber risk is a growing critical concern for organizations of all sizes and public entities globally, as we continue to rely on information technology and digital devices. But in the wake of steadily rising digital threats, cyber insurance is getting increasingly expensive—and difficult—for companies to procure.

Bridging the Mid-Market Talent Gap for Digital Transformation

To achieve business value from data technology investments, mid-market companies need the right technical expertise and talent. Yet many mid-market firms push this work onto their IT manager, assuming that because it is technology related, IT can handle it. This is a mistake because most IT departments do not have time for data analytics. They are fully occupied keeping company systems stable and secure and supporting your team members. By necessity, IT deprioritizes data queries in favor of crucial cyberattack prevention. Business analysts and executives get frustrated waiting for query results, and by the time the results arrive, the data is stale or the business opportunity has passed.

But even if your IT team had the time, it would still be a mistake to rely on traditional technology administrators for data analytics success, unless your IT department has expertise across a wide range of skill sets: cloud architecture, database engineering, master data management, data quality, data profiling, and data cleansing. What’s more, your IT manager would need command of data integration, data ingestion, data preparation, data security, regulatory compliance, data science, and building pipelines of data ready for executive reporting from multiple cloud and on-premises environments.

When you read this laundry list of needs, it becomes clear that most mid-market IT departments lack the specialized experts needed to derive business value from their data. Unlike larger enterprises that have the resources to hire skilled staff for these roles, the midsize organization requires another option that provides access to the right tools, resources, and support: one that integrates and enriches data and brings expertise in AI, machine learning, and predictive analytics to achieve more useful results.

4 Questions Mid-market Companies Should Ask Themselves About Data Protection

How safe is cloud security, which now often relies on “zero trust” principles that verify every user and device on each request rather than trusting anything because of its network location? While some worry that cloud security is less reliable than on-premises security, that’s not actually the case, particularly for mid-market businesses. The fact is that your data is actually more secure in a remote data center managed by security experts than on servers managed by your in-house IT team.

You may feel a false sense of security by having your IT department guard your servers in a closet — but this strategy is extremely risky when it comes to data protection. It’s not standard for mid-market IT departments to possess expert skills in cloud security and data security, which are needed to properly safeguard data. Many mid-market companies, particularly those not in highly regulated industries, do not currently have Security Operations Centers.

Lowering Cybersecurity Insurance Premiums with Managed Security Services

Midmarket organizations face the threat of cyberattacks that put every organization at great risk. As a result, a greater number of IT professionals are turning to managed security services to lower cybersecurity insurance premiums.